Applications in Imaging and Performance Risk Management

The pharmaceutical industry and regulators alike have acknowledged for some time that the clinical development process is in need of an overhaul.

Companies must find a way to innovate more efficiently to meet market demands and remain competitive. The answer lies in going beyond making incremental improvements with “sustainable technology” to adopting “disruptive technology” that upends the traditional ways of working.

Artificial Intelligence (AI), enabled by a scalable, cloud-hosted modern data platform, has the potential to disrupt practically every stage of the clinical development process. Clinical trial sponsors that leverage AI in their development programs benefit from greater insight into treatment safety/efficacy, which leads to better informed decision making and ultimately reduces the time needed to bring life-saving treatments to market.

In particular, there are applications for AI in imaging and in performance/risk management, both of which can speed up clinical trials and reduce overall development costs.

First, a few words about how a modern data platform and data infrastructure enable AI in clinical development.

Companies cannot take advantage of disruptive technologies such as AI without first having the right underpinning of a scalable data platform. Such a platform enables real-time ingestion, integration, enrichment, and curation of structured, semi-structured, unstructured, and binary data (all of which are proliferating in clinical trials). AI models must be “trained” following repeatable processes and then integrated into applications for smart decision-making.

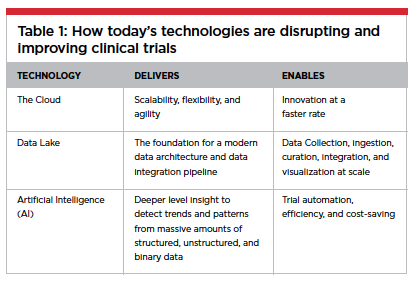

This is where the dynamic trio of AI, a modern data platform, and the cloud comes in (Table 1). A data lake (a data platform built on the principles of modern architecture and cloud computing) that amasses raw data from disparate sources in one place can be the foundational data layer, serving up data integration, AI, and machine learning (ML) at scale.

The establishment of effective data governance coupled with data privacy and protection within the data lake is equally important. Effective data governance helps maintain the quality of the data over a sustained period of time and brings disparate teams and systems together, preventing the data lake from becoming a data swamp.

In a nutshell, a modern data platform such as an enterprise data lake streamlines the steps of data integration throughout the entire clinical data journey. With data governance and data quality in mind, it also establishes a foundation on which AI-driven applications can be built to gain operational efficiency and enhanced automation.
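As a rough illustration of how raw ingestion and a governance check might fit together, the sketch below lands records from different sources unchanged, tags each with provenance metadata, and flags records that lack a required field. The names `RawRecord`, `ingest`, `quality_issues`, and the `REQUIRED_FIELDS` rule set are hypothetical and are not taken from any particular platform.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical governance rule: the fields each source is expected to supply.
REQUIRED_FIELDS = {
    "lab": {"subject_id", "test", "value"},
    "epro": {"subject_id", "question", "answer"},
}


@dataclass
class RawRecord:
    source: str        # where the record came from (lab, ePRO, imaging, ...)
    payload: dict      # the record exactly as received, kept in raw form
    ingested_at: str   # UTC timestamp, for lineage and audit
    checksum: str      # SHA-256 of the payload, to detect later changes


def ingest(source: str, payload: dict) -> RawRecord:
    """Wrap a raw payload with provenance metadata without altering it."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return RawRecord(
        source=source,
        payload=payload,
        ingested_at=datetime.now(timezone.utc).isoformat(),
        checksum=hashlib.sha256(body).hexdigest(),
    )


def quality_issues(record: RawRecord) -> list:
    """List required fields the record is missing; sources without rules pass."""
    required = REQUIRED_FIELDS.get(record.source, set())
    missing = sorted(required - set(record.payload))
    return [f"missing field: {name}" for name in missing]
```

Keeping the payload untouched and recording issues alongside it, rather than rejecting the record, is one way to preserve the raw data while still surfacing quality problems early.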

In offering a harmonized view of clinical data across sources and applications, the right platform provides an integrated experience for clinical development, opening up new possibilities for trial management.

Specifically, having data readily accessible within the data lake gives research teams the ability to:

Measure a compound’s safety and efficacy

Evaluate study performance against key metrics

Monitor the clinical program and assess risks proactively

Use data analytics to quickly select sites and identify patients

Analyze data at the therapeutic level to predict how a product will perform in the market

Focus on research and analysis (rather than on data wrangling)

Employ AI-enabled “conversational interfaces” or bots for patients, sites, and internal operations

The architecture must support a scalable data pipeline that can quickly transfer and transform data. It must also provide a platform for algorithms to train, test, and validate multiple statistical models quickly and efficiently — the basic process of ML, which can be used to detect trends and patterns in massive amounts of data.
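The train, test, and validate cycle described above can be sketched in a few lines. This is a minimal illustration on synthetic data, assuming two deliberately simple candidate models (a constant mean and a least-squares line); the function names and the 60/20/20 split are assumptions made for the example, not a prescribed method.

```python
import random


def split(rows, train=0.6, valid=0.2, seed=0):
    """Shuffle a copy of the rows and cut it into train/validation/test sets."""
    rows = rows[:]
    random.Random(seed).shuffle(rows)
    a = int(len(rows) * train)
    b = a + int(len(rows) * valid)
    return rows[:a], rows[a:b], rows[b:]


def fit_mean(rows):
    """Candidate 1: always predict the mean outcome seen in training."""
    mean_y = sum(y for _, y in rows) / len(rows)
    return lambda x: mean_y


def fit_line(rows):
    """Candidate 2: ordinary least-squares line through the training rows."""
    n = len(rows)
    mx = sum(x for x, _ in rows) / n
    my = sum(y for _, y in rows) / n
    sxx = sum((x - mx) ** 2 for x, _ in rows)
    sxy = sum((x - mx) * (y - my) for x, y in rows)
    slope = sxy / sxx
    return lambda x: my + slope * (x - mx)


def mse(model, rows):
    """Mean squared error of a fitted model on a set of (x, y) rows."""
    return sum((model(x) - y) ** 2 for x, y in rows) / len(rows)


def select_model(rows, candidates):
    """Train every candidate, pick the best on validation, score it on test."""
    train_rows, valid_rows, test_rows = split(rows)
    fitted = {name: fit(train_rows) for name, fit in candidates.items()}
    best = min(fitted, key=lambda name: mse(fitted[name], valid_rows))
    return best, mse(fitted[best], test_rows)


# Synthetic data: a noisy linear relationship, made up for illustration only.
rng = random.Random(42)
data = [(x, 2.0 * x + 1.0 + rng.gauss(0, 0.5)) for x in range(50)]
best_name, test_error = select_model(data, {"mean": fit_mean, "line": fit_line})
```

The test set is touched only once, after the winner is chosen on the validation set, so the reported error is not biased by the selection step.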

Given the volume, velocity, and variety of data captured and managed in today’s clinical trials, this big data problem “screams” for a solution that offers scale and performance. Housing a data lake in the cloud with serverless computing offers the scalability, flexibility, and agility to address these needs and enables biopharma companies to take the next step in leveraging the advantages of AI throughout clinical development.

Image Interpretation

In many therapeutic areas, imaging is essential to screening patients, determining stage of disease, and measuring therapeutic response. However, detecting subtleties in images and maintaining consistency in how they are interpreted has always been a challenge. In the not-too-distant future, AI may be used to assist in evaluating images across a variety of imaging modalities (MRIs, PET-CT scans, etc.). And, although it is likely that the technology will initially augment human assessment, eventually the equation may be reversed. The day may come when human assessment augments computer reads, especially in low-risk disease areas.

This transfer will happen gradually over time as AI systems “learn” and develop more refined capabilities. ML is a technique within AI through which software algorithms are trained to act on data. The learning requires massive amounts of data and an iterative process of human correction and refinement. In this case, an AI image reader would require years of historical image data across disease states and image modalities.

Such a system would employ automated scoring criteria and calculations, and could be expected to deliver greater imaging accuracy, increased speed, and reduced costs.

Trial Oversight and Performance Management

Sources of performance risk in clinical trials abound, encompassing everything from data quality to patient enrollment, retention, and compliance to site performance. For such risks to be managed successfully, sponsors must shift their mindset from one that is reactive and resource-driven to one that is proactive and data-driven. Having the right technology in place can be a catalyst for making the change.

An effective performance risk strategy integrates data from multiple sources into an easy-to-access platform that supports real-time decisions, aided by AI. A technology platform that centralizes data and related action items provides the transparency that study teams need to monitor the status of their trials with confidence.

With AI, however, risk management can go beyond monitoring, to actually averting problems by predicting where and when a situation is likely to cause an issue. An intelligent system can predict performance based on a set of known facts and observed trends. Then, system-generated alerts can notify study teams of signals — at the portfolio, country, study, or site level — of emerging issues that could endanger the safety, quality, compliance, or timeliness of their studies. AI can also point to the best remedy for the situation, and action items can then be assigned to the appropriate stakeholders to mitigate the situation proactively and efficiently.
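To make the idea of alerts at different levels concrete, the sketch below rolls made-up site metrics up to the site, study, or country level and flags any group whose rate of open data queries exceeds a threshold. The metric, the `MAX_QUERY_RATE` threshold, and the `rollup_alerts` helper are all hypothetical; a real system would likely track many signals and learn its thresholds from data.

```python
from collections import defaultdict

# Hypothetical threshold: flag a group when more than 15% of its data points
# have an open query.
MAX_QUERY_RATE = 0.15


def rollup_alerts(site_rows, level):
    """Return groups at `level` ("site", "study", or "country") over the threshold."""
    totals = defaultdict(lambda: [0, 0])  # group -> [open queries, data points]
    for row in site_rows:
        group = totals[row[level]]
        group[0] += row["open_queries"]
        group[1] += row["data_points"]
    return sorted(
        name
        for name, (queries, points) in totals.items()
        if points and queries / points > MAX_QUERY_RATE
    )


# Illustrative, made-up metrics for three sites in one study.
SITES = [
    {"site": "S01", "study": "ST-A", "country": "DE", "open_queries": 40, "data_points": 200},
    {"site": "S02", "study": "ST-A", "country": "DE", "open_queries": 5, "data_points": 300},
    {"site": "S03", "study": "ST-A", "country": "US", "open_queries": 10, "data_points": 100},
]

site_alerts = rollup_alerts(SITES, "site")
country_alerts = rollup_alerts(SITES, "country")
```

With these numbers the site-level view flags S01, while the country and study roll-ups stay below the threshold, which is one reason to check signals at more than one level.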

For example, AI can detect a patient who is at risk of not complying with the study protocol, based on thousands of data points collected across multiple trials. These data points might include the patient’s timeliness to date in completing assignments, timeliness in making study visits, and the quality of the patient’s assessments using electronic Patient Reported Outcome (ePRO) devices.

The system may report, for instance, that a patient’s probability of completing the study drops from 90% to 70% after a certain pattern of behavior (such as missing scheduled site visits, or variable response time). The system may consequently recommend that the notification frequency prior to a scheduled visit be increased from one to three.
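The scenario above can be sketched as a simple logistic score paired with a reminder rule. The coefficients, feature names, and the 0.80 cut-off below are invented for illustration and chosen so that the baseline probability is about 90% and two missed visits bring it to about 70%; a production system would learn such values from historical trial data.

```python
import math

# Hypothetical coefficients, not learned from real data.
INTERCEPT = math.log(9)  # log-odds of a 0.90 baseline completion probability
WEIGHTS = {
    "missed_visits": -0.675,       # each missed site visit lowers the log-odds
    "late_diary_entries": -0.10,   # each late ePRO entry lowers them slightly
}


def completion_probability(features: dict) -> float:
    """Estimate the probability that a patient completes the study."""
    score = INTERCEPT + sum(
        weight * features.get(name, 0) for name, weight in WEIGHTS.items()
    )
    return 1.0 / (1.0 + math.exp(-score))


def reminders_before_visit(probability: float) -> int:
    """Hypothetical rule: send three reminders when completion looks at risk."""
    return 3 if probability < 0.80 else 1


baseline = completion_probability({})
after_pattern = completion_probability({"missed_visits": 2})
recommended = reminders_before_visit(after_pattern)
```

Separating the score from the recommendation keeps the intervention rule easy for study teams to review and adjust without retraining the model.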

Conclusion

The technology capable of transforming clinical development in these ways is rapidly becoming available. Even so, sponsors should consider its adoption a journey; it is the nature of all technology to improve with time, and this is especially true of AI, as ML systems require training and ongoing refinement. Sponsors that have an open data culture, are comfortable with data hosted in the cloud, have institutionalized data governance, have an appetite for change, and are willing to take risks will be able to embrace AI more easily.

It goes without saying that you must have access to data to deploy AI/ML solutions, and the ability to easily collect critical data in increasing volume and frequency requires the right patient- and site-facing technologies along with the infrastructure outlined above.

We are already moving in that direction with the expansion of patient reported outcomes, along with the opportunities sensors and wearables provide. We need to put patients at the center of the equation and get there faster.

~~~~~~~~~~~~~~~~~~~~~~~~~

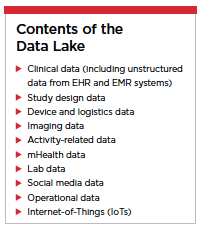

Contents of the Data Lake

Clinical data (including unstructured data from EHR and EMR systems)

Study design data

Device and logistics data

Imaging data

Activity-related data

mHealth data

Lab data

Social media data

Operational data

Internet of Things (IoT) data

~~~~~~~~~~~~~~~~~~~~~~~~~

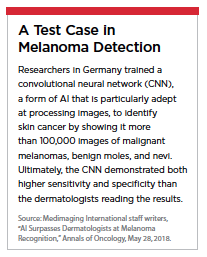

A Test Case in Melanoma Detection

Researchers in Germany trained a convolutional neural network (CNN), a form of AI that is particularly adept at processing images, to identify skin cancer by showing it more than 100,000 images of malignant melanomas, benign moles, and nevi. Ultimately, the CNN demonstrated both higher sensitivity and specificity than the dermatologists reading the results.

Source: Medimaging International staff writers, “AI Surpasses Dermatologists at Melanoma Recognition,” Annals of Oncology, May 28, 2018.
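For readers less familiar with the two measures, sensitivity is the share of true melanomas a reader correctly flags, and specificity is the share of benign lesions a reader correctly clears. The sketch below computes both from a list of ground-truth labels and reader calls; the ten example cases are made up for illustration and are not data from the study.

```python
def sensitivity_specificity(truth, predicted):
    """Compute (sensitivity, specificity) from boolean labels, True = malignant."""
    pairs = list(zip(truth, predicted))
    tp = sum(1 for t, p in pairs if t and p)          # malignant, flagged
    fn = sum(1 for t, p in pairs if t and not p)      # malignant, missed
    tn = sum(1 for t, p in pairs if not t and not p)  # benign, cleared
    fp = sum(1 for t, p in pairs if not t and p)      # benign, flagged
    return tp / (tp + fn), tn / (tn + fp)


# Made-up example: four malignant and six benign lesions.
truth = [True, True, True, True, False, False, False, False, False, False]
predicted = [True, True, True, False, False, False, False, False, True, False]

sens, spec = sensitivity_specificity(truth, predicted)
```

In this invented example the reader catches three of four melanomas (sensitivity 0.75) and clears five of six benign lesions (specificity about 0.83).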

ERT is a global data and technology company that minimizes risk and uncertainty in clinical trials, so that you can move ahead quickly – and with confidence.

For more information, visit ert.com.